Follow-Me Gesture-Controlled Drone System

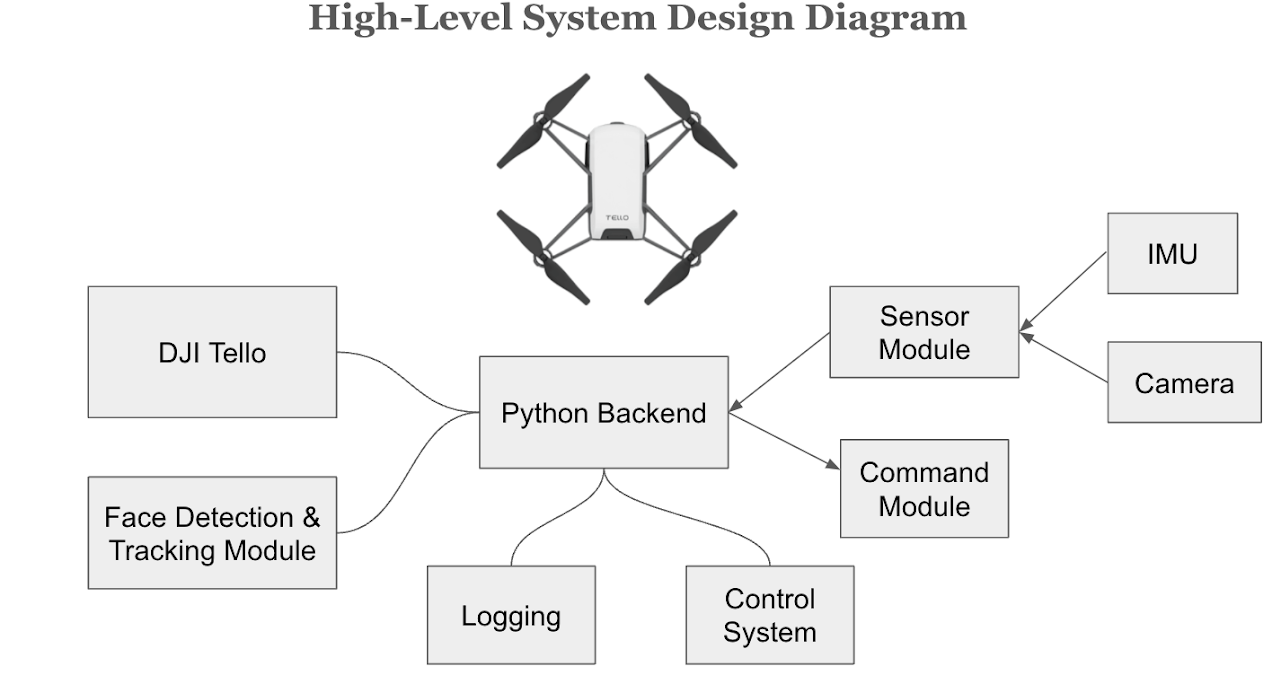

An autonomous drone system that follows a person using real-time face tracking and responds to hands-free gesture commands for media capture. Built on the DJI Tello platform with PID-based feedback control, Haar Cascade face detection, and IMU-based snap gesture recognition via a custom sensor glove.

Designed for scenarios where both hands are occupied — such as mountain biking or kayaking — eliminating the need for a separate controller operator. The drone autonomously maintains a consistent spatial relationship with the target, recovers when the face leaves the frame, and executes media commands triggered by hand snaps.

Demo Walkthrough

- Program starts — drone scans the room by rotating until a face is detected

- Face locked — drone adjusts yaw, height, and distance to maintain a constant transform

- Target exits frame — drone remembers last known position and rotates in exit direction to reacquire

- Single snap gesture — drone flies a circular path while recording panoramic video, then resumes tracking

- Double snap gesture — drone captures and saves a still photo

Team & My Role

During the course project I built the media control module (panoramic recording, photo capture, command dispatch), contributed to the face detection & following module, and ran end-to-end system testing. After the course ended I independently refactored and rewrote the entire codebase — restructuring modules, cleaning up architecture, and adding future-improvement infrastructure. The current open-source repository reflects almost entirely my post-course rewrite.

System Architecture

Key Features

- Real-time face tracking — Closed-loop 3-axis movement (PID-controlled yaw rotation and vertical positioning, threshold-based forward/backward distance) to keep the target centered in frame

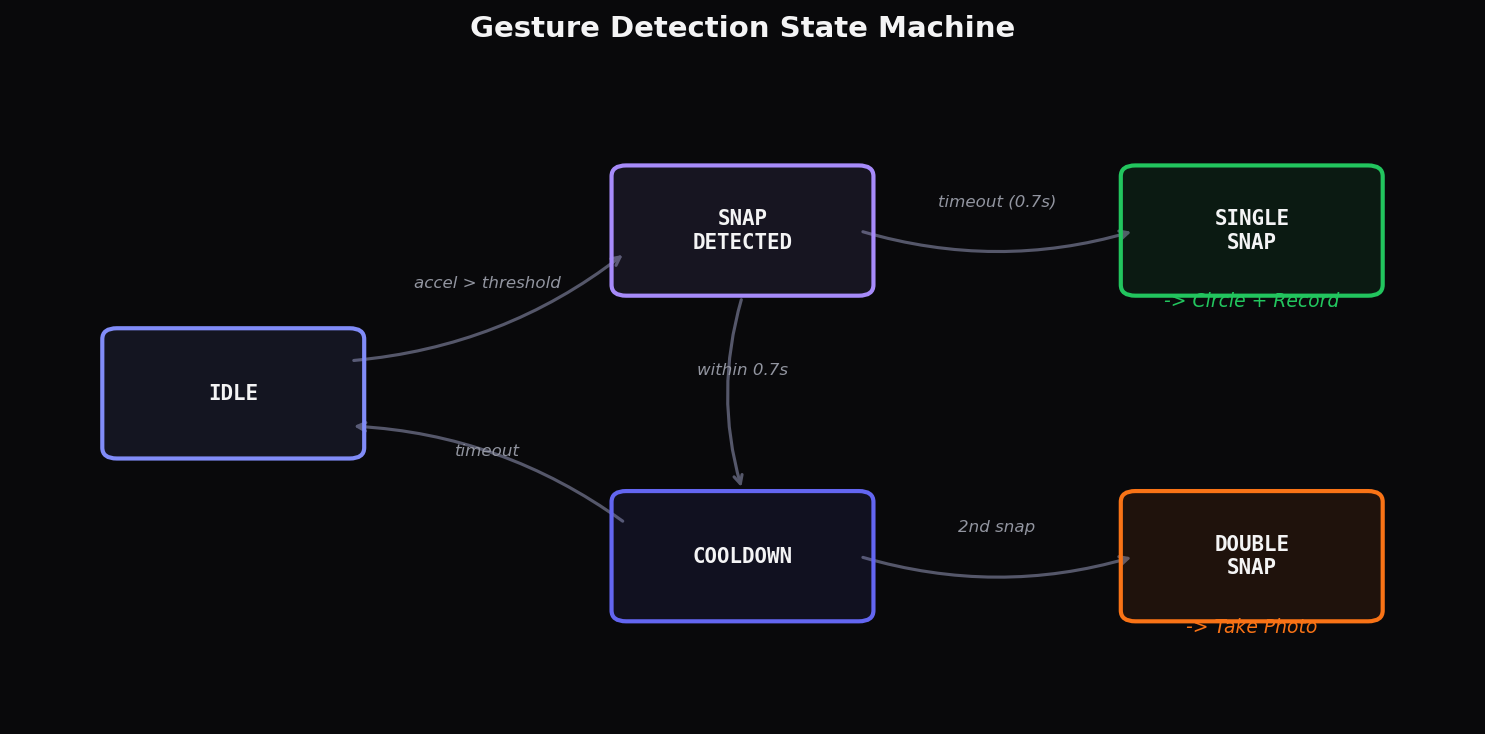

- Gesture commands — IMU-based snap detection: single snap triggers circular panoramic video recording, double snap captures a still photo

- Lost-face recovery — When the subject moves out of frame, the drone automatically rotates in the last known direction to relocate the face

- Panoramic recording — Circular flight path with simultaneous video capture, then automatic return to face-tracking mode

- Indoor safety — Configurable height limit (default 220 cm) prevents ceiling collisions during indoor operation

- Dual-process architecture — Gesture recognition and drone control run as independent processes communicating via atomic file-based IPC, preventing synchronization delays

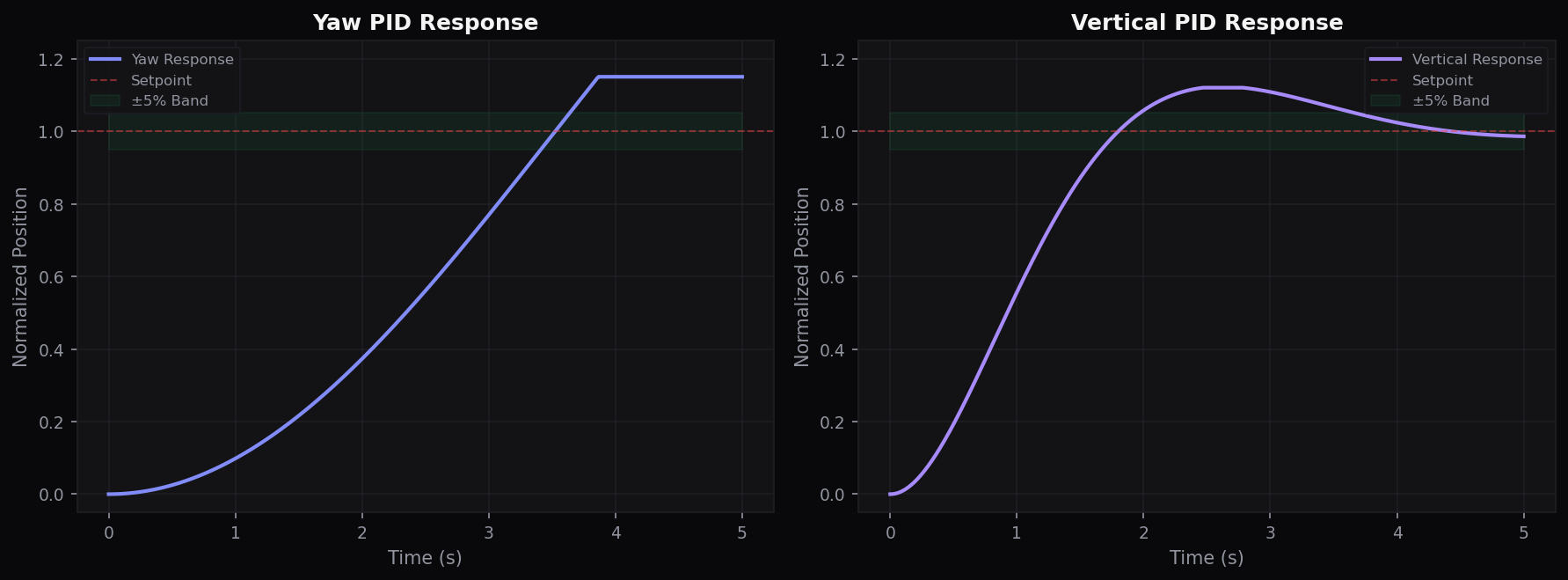

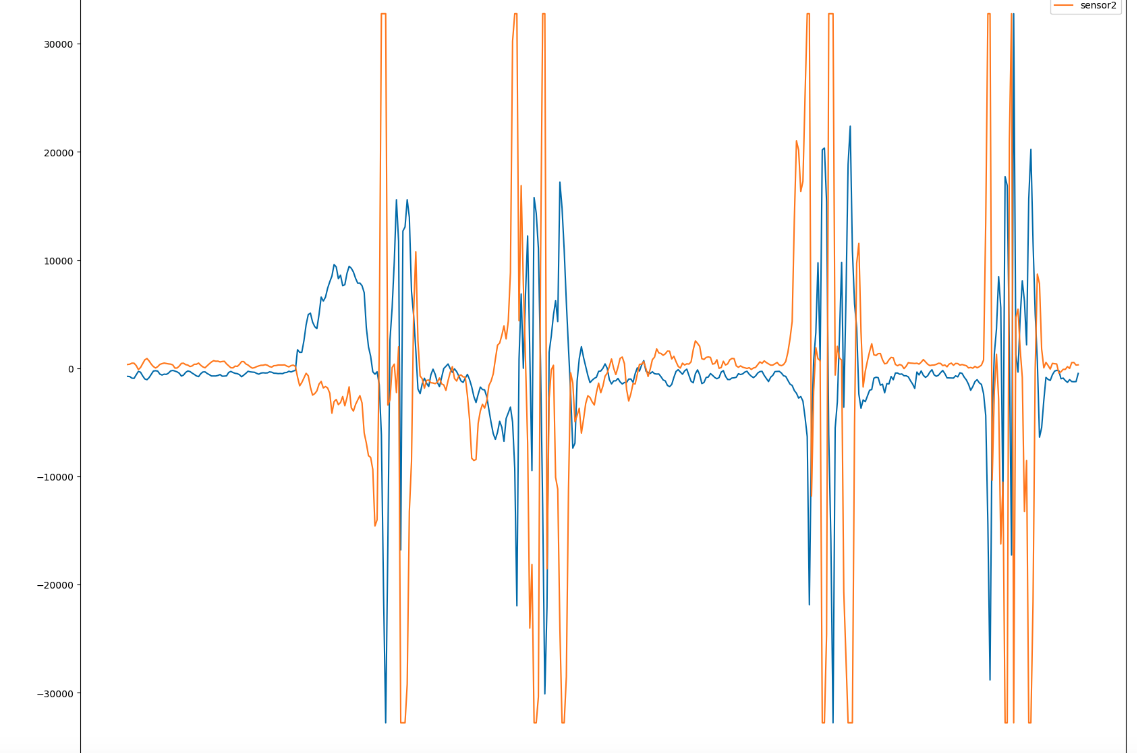
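The indoor height limit listed among the features above can be pictured as a guard applied to the vertical speed command just before it is sent. This is a minimal sketch rather than the project's code: `limit_climb` is a hypothetical helper, and the height reading is assumed to come from the drone's telemetry.

```python
def limit_climb(up_down_speed: int, height_cm: float, max_height_cm: float = 220.0) -> int:
    """Suppress upward commands at or above the height limit.

    Descending commands always pass through, so the drone can still
    move away from the ceiling. The 220 cm default comes from the text;
    the function name and signature are illustrative assumptions.
    """
    if height_cm >= max_height_cm and up_down_speed > 0:
        return 0
    return up_down_speed


print(limit_climb(30, 150))   # 30: below the limit, command unchanged
print(limit_climb(30, 225))   # 0: at or above the limit, climb suppressed
print(limit_climb(-30, 225))  # -30: descending is still allowed
```

Applying the guard to the command, not to the controller state, keeps the PID loop unaware of the limit, which is simple but means the integral term can keep growing while the drone sits at the ceiling.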

PID Control Response

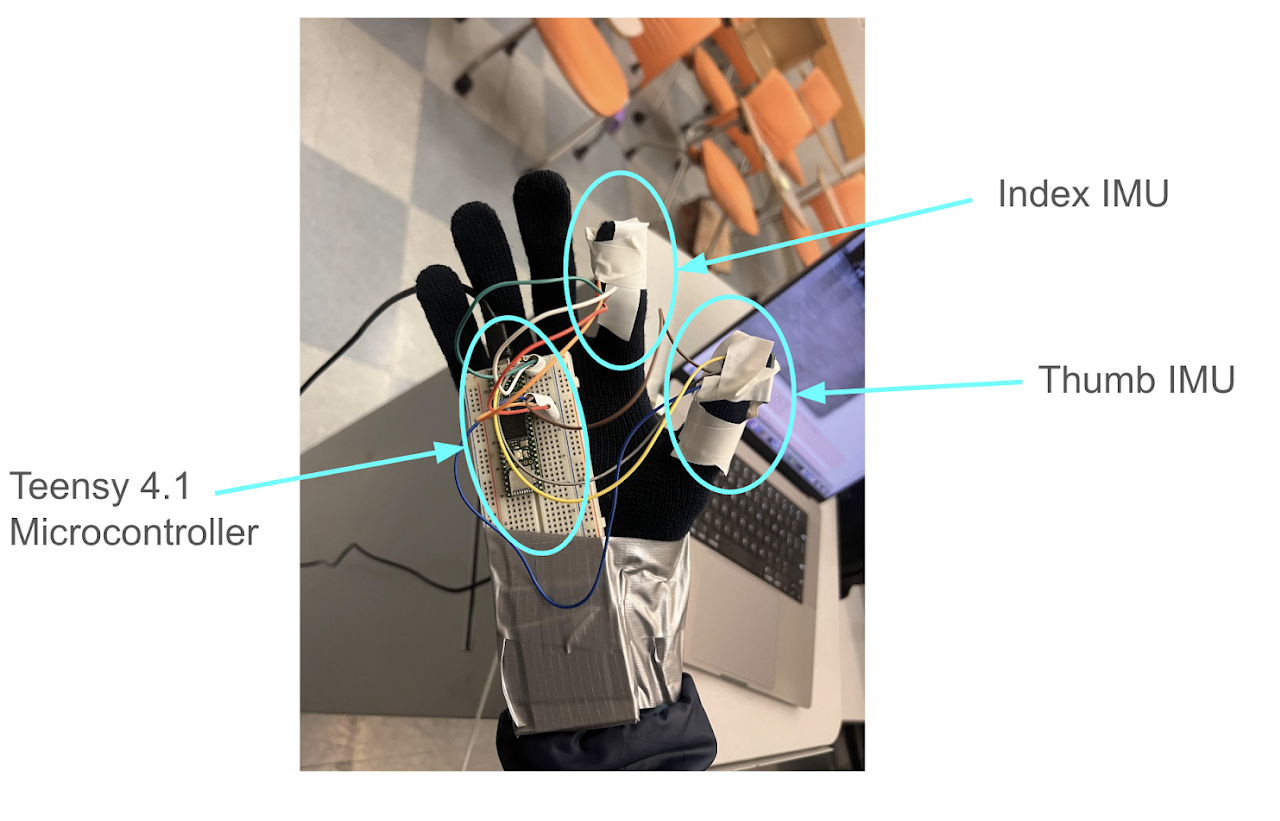

Gesture Detection

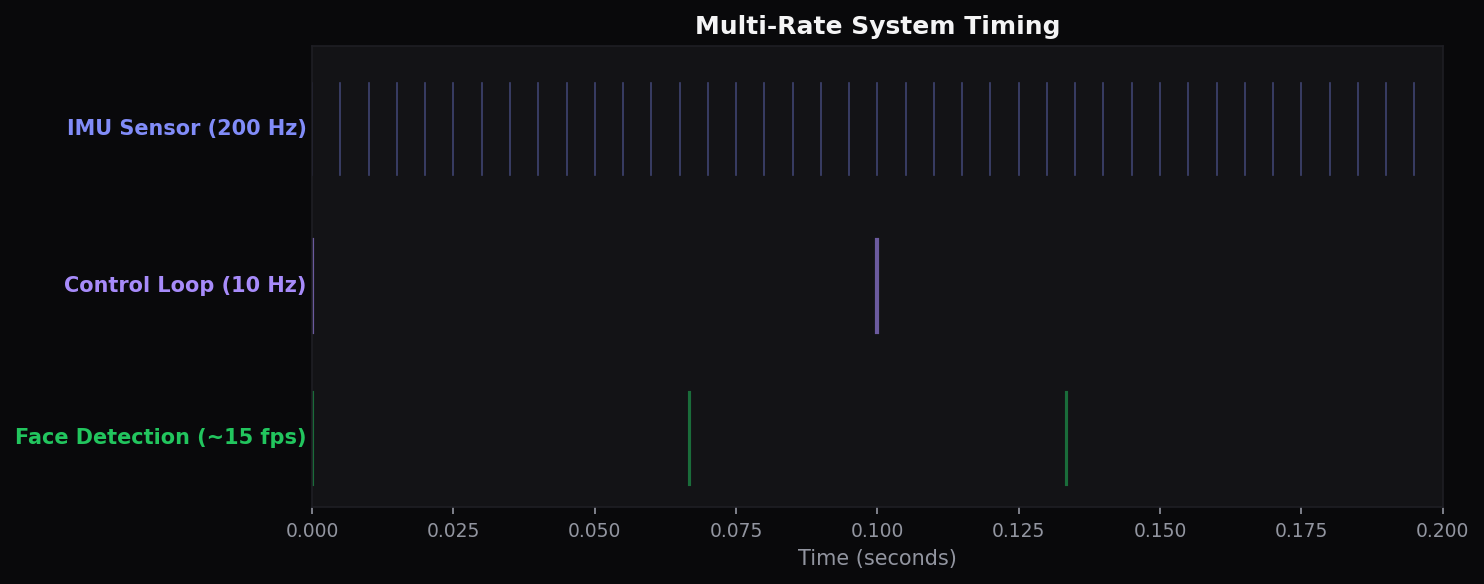

Multi-Rate Timing

Design Decisions

- Haar Cascade over YOLO — Prioritized real-time speed and low computational overhead on the Tello's limited hardware, at the cost of some detection robustness

- PID control over SDK distance commands — The official SDK's discrete movement commands were imprecise; continuous PID-based RC control produces smoother, more responsive tracking

- Bounding box area for depth — Face bounding box pixel area serves as a proxy for distance, avoiding the need for depth sensors while maintaining adequate following behavior

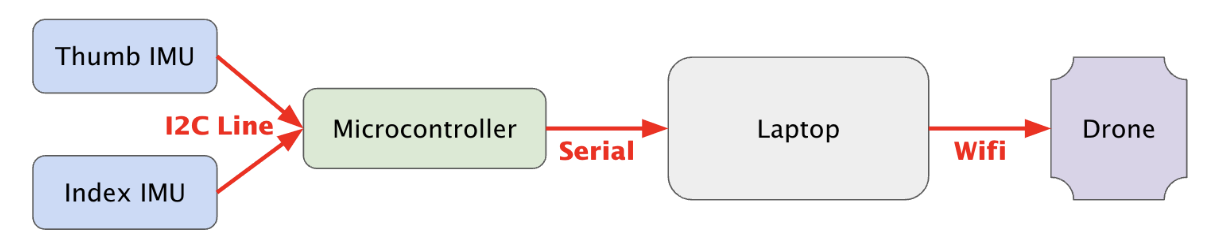

- Decoupled gesture processing — IMU data is processed in a separate process and written to a file that the control loop reads, eliminating synchronization bottlenecks between the 200 Hz sensor rate and the 10 Hz control loop
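The bounding-box-area proxy from the list above can be related to metric distance through the pinhole camera model, where apparent width and height each scale with the inverse of distance, so area scales with its inverse square. The sketch below is illustrative only: `estimate_distance_cm` and the calibration pair (a reference area measured at a known distance) are hypothetical, not values from the project.

```python
import math


def estimate_distance_cm(area_px: float, ref_area_px: float, ref_distance_cm: float) -> float:
    """Estimate distance from face bounding box area under a pinhole model.

    area ~ 1 / distance**2, so distance = ref_distance * sqrt(ref_area / area).
    ref_area_px and ref_distance_cm form one assumed calibration measurement.
    """
    if area_px <= 0:
        raise ValueError("area_px must be positive")
    return ref_distance_cm * math.sqrt(ref_area_px / area_px)


# Hypothetical calibration: the face covers 14,500 px^2 at 100 cm.
print(estimate_distance_cm(14_500, 14_500, 100.0))      # 100.0
print(estimate_distance_cm(14_500 / 4, 14_500, 100.0))  # 200.0 (quarter area, double distance)
```

Under this model the 14,000 to 15,000 pixel band is narrow in distance terms: sqrt(15000 / 14000) is about 1.035, so the whole band spans roughly a 3.5% change in distance.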

Tradeoffs & Limitations

- No face identity — Haar Cascade detects all faces equally; in crowded environments the drone may lock onto a bystander instead of the intended target

- Two-command gesture set — Only single-snap and double-snap are recognized; a richer gesture vocabulary could enable takeoff, landing, and tracking-mode changes

- ~10 min flight time — Tello battery constrains the operational window; lightweight face detection was chosen partly to conserve power for longer sessions

- No obstacle avoidance — Indoor furniture and outdoor terrain are not sensed; deferred to future LiDAR / ultrasonic integration

Code Highlights

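    class PIDController:
        def __init__(self, kp, ki, kd, limit):
            self.kp, self.ki, self.kd = kp, ki, kd
            self.limit = limit  # bounds both the integral term and the output
            self._integral = 0.0
            self._prev_error = 0.0

        def update(self, error, dt):
            self._integral += error * dt
            self._integral = max(-self.limit, min(self.limit, self._integral))  # anti-windup
            derivative = (error - self._prev_error) / dt if dt > 0 else 0.0
            self._prev_error = error
            output = self.kp * error + self.ki * self._integral + self.kd * derivative
            return max(-self.limit, min(self.limit, output))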

    class SnapDetector:
        """Detect snap gestures from dual MPU6050 acceleration differentials."""

        def detect(self, accel_1, accel_2, timestamp):
            diff = abs(accel_1 - accel_2)
            if self.state == "idle" and diff > self.threshold:  # 50,000 units
                self.state = "detected"
                self.last_snap = timestamp
            elif self.state == "detected":
                if timestamp - self.last_snap > self.window:  # 0.7 s timeout
                    return self._emit(self.snap_count)  # 1 = circle, 2 = photo

    import os
    import pickle

    class CommandChannel:
        """Lock-free IPC via atomic os.replace: no corruption, no locks."""

        def write(self, data):
            tmp = self.path + ".tmp"
            with open(tmp, "wb") as f:
                pickle.dump(data, f)
            os.replace(tmp, self.path)  # atomic on POSIX, so readers never see a partial file

        def read_new(self):
            with open(self.path, "rb") as f:  # close the handle as soon as the read finishes
                data = pickle.load(f)
            new = data[self._cursor:]  # only unprocessed commands
            self._cursor = len(data)   # advance so each command is returned once
            return new

How It Works

Face Detection: Each video frame is converted to grayscale and processed by a Haar Cascade classifier. When multiple faces are detected, the system selects the largest by bounding box area, on the assumption that it belongs to the closest person. The face center coordinates and area are then passed to the tracking controller.
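The selection step described above can be sketched as a small pure function. It assumes detections arrive as `(x, y, w, h)` boxes in pixels, the layout returned by OpenCV's `CascadeClassifier.detectMultiScale`; the use of OpenCV and the name `select_target` are assumptions for illustration.

```python
from typing import Optional, Sequence, Tuple

Box = Tuple[int, int, int, int]  # (x, y, w, h) in pixels


def select_target(faces: Sequence[Box]) -> Optional[Tuple[Tuple[int, int], int]]:
    """Return ((center_x, center_y), area) for the largest box, or None if there are no boxes."""
    if len(faces) == 0:
        return None
    # Largest bounding box area is treated as the closest person.
    x, y, w, h = max(faces, key=lambda box: box[2] * box[3])
    return (x + w // 2, y + h // 2), w * h


# Two hypothetical detections: the second is larger, so it becomes the target.
faces = [(40, 60, 50, 50), (300, 120, 120, 110)]
print(select_target(faces))  # ((360, 175), 13200)
print(select_target([]))     # None
```

Returning `None` for an empty detection list gives the tracking loop an explicit signal to count a lost frame.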

PID Tracking: Two independent PID controllers compute yaw speed (to center the face horizontally) and vertical speed (to hold the face in the upper quarter of the frame). Forward/backward movement uses threshold logic on the bounding box area rather than a PID loop: the drone moves forward when the area falls below a 14,000–15,000 px² band and backward when it rises above it. When the face is lost for more than 15 consecutive frames, the drone rotates in the last known direction to search.

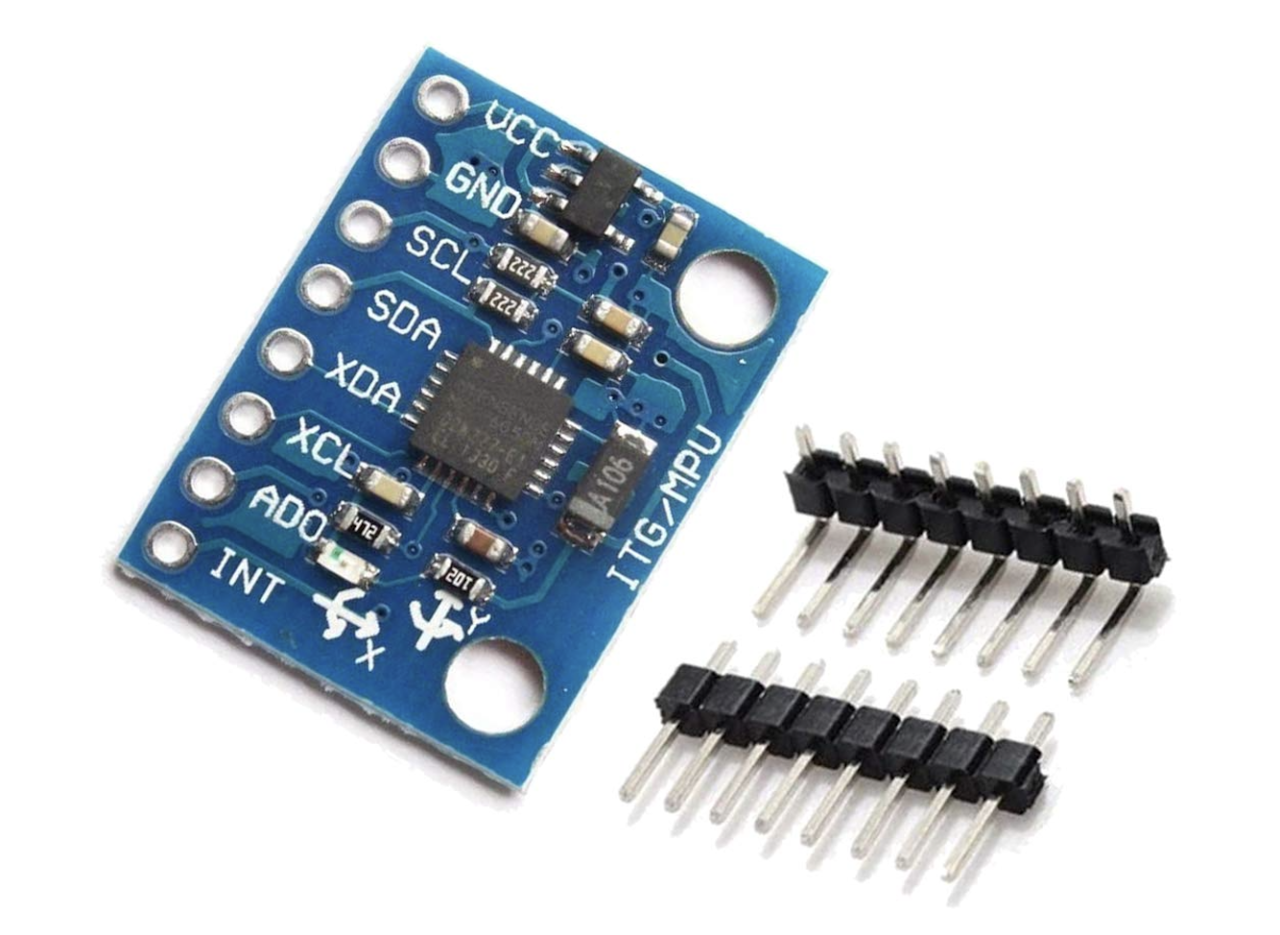
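One control step of the tracking logic described above might look like the following sketch. The area band and the 15-frame limit come from the text; the frame size, gains, speeds, and the `Tracker` and `PID` names are placeholders. The final line formats the Tello SDK's `rc a b c d` command (left/right, forward/back, up/down, yaw, each from -100 to 100) as one assumed way of sending the result.

```python
from dataclasses import dataclass


def clamp(value: float, limit: float) -> float:
    return max(-limit, min(limit, value))


class PID:
    """Minimal PID with a clamped integral term and a clamped output."""

    def __init__(self, kp: float, ki: float, kd: float, limit: float = 100.0):
        self.kp, self.ki, self.kd, self.limit = kp, ki, kd, limit
        self.integral = 0.0
        self.prev_error = 0.0

    def update(self, error: float, dt: float) -> float:
        self.integral = clamp(self.integral + error * dt, self.limit)
        derivative = (error - self.prev_error) / dt if dt > 0 else 0.0
        self.prev_error = error
        return clamp(self.kp * error + self.ki * self.integral + self.kd * derivative, self.limit)


@dataclass
class Tracker:
    frame_w: int = 960      # assumed video frame size
    frame_h: int = 720
    area_min: int = 14_000  # area band from the text
    area_max: int = 15_000
    lost_limit: int = 15    # frames without a face before searching
    fb_speed: int = 20      # hypothetical forward/backward speed
    search_yaw: int = 30    # hypothetical search rotation speed

    def __post_init__(self):
        self.yaw_pid = PID(0.3, 0.0, 0.01)  # placeholder gains
        self.ud_pid = PID(0.4, 0.0, 0.01)
        self.lost_frames = 0
        self.last_dir = 1  # +1 means the face was last seen right of center

    def step(self, face, dt: float):
        """face is ((cx, cy), area) or None. Returns (forward_back, up_down, yaw)."""
        if face is None:
            self.lost_frames += 1
            if self.lost_frames > self.lost_limit:
                return 0, 0, self.search_yaw * self.last_dir  # rotate toward the exit side
            return 0, 0, 0  # hover while waiting for the face to reappear
        self.lost_frames = 0
        (cx, cy), area = face
        err_x = cx - self.frame_w / 2  # positive: face is right of center
        err_y = self.frame_h / 4 - cy  # positive: face is above the upper-quarter line
        self.last_dir = 1 if err_x >= 0 else -1
        if area < self.area_min:
            fb = self.fb_speed    # face looks small: move closer
        elif area > self.area_max:
            fb = -self.fb_speed   # face looks large: back away
        else:
            fb = 0
        return fb, round(self.ud_pid.update(err_y, dt)), round(self.yaw_pid.update(err_x, dt))


tracker = Tracker()
fb, ud, yaw = tracker.step(((600, 180), 12_000), dt=0.1)
print(f"rc 0 {fb} {ud} {yaw}")
```

Keeping the distance axis on a dead band instead of a third PID loop avoids chasing frame-to-frame noise in the detected box size, at the cost of coarser distance holding.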

Gesture Recognition: Two MPU6050 IMU sensors mounted on a glove detect snap gestures by monitoring the acceleration differential between sensors. When the difference exceeds a 50,000-unit threshold, a snap is registered. A state machine with hysteresis prevents false positives, and consecutive snaps within a 0.7-second window are grouped into a single gesture command.
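The hysteresis and grouping behaviour described above can be sketched as a small state machine. The 50,000-unit threshold and the 0.7-second window come from the text; the lower release level, the class name `SnapGrouper`, and the synthetic 200 Hz signal are assumptions made for this illustration.

```python
from typing import List, Optional


class SnapGrouper:
    """Count threshold crossings and group them into one gesture per quiet window."""

    def __init__(self, threshold: float = 50_000, release: float = 25_000, window: float = 0.7):
        self.threshold = threshold  # trigger level, from the text
        self.release = release      # hypothetical re-arm level (hysteresis)
        self.window = window        # seconds of quiet that close a gesture
        self.armed = True
        self.count = 0
        self.last_snap = 0.0

    def update(self, diff: float, t: float) -> Optional[int]:
        """Feed one sample; return the snap count when a gesture completes, else None."""
        if self.armed and diff > self.threshold:
            self.armed = False  # ignore further samples of the same spike
            self.count += 1
            self.last_snap = t
        elif not self.armed and diff < self.release:
            self.armed = True   # signal has settled, so the next spike counts
        if self.count and t - self.last_snap > self.window:
            count, self.count = self.count, 0
            return count
        return None


def run(snap_times: List[float], rate_hz: int = 200, duration: float = 2.0) -> List[int]:
    """Simulate a sensor stream with 20 ms spikes at the given times."""
    detector = SnapGrouper()
    gestures = []
    for i in range(int(duration * rate_hz)):
        t = i / rate_hz
        diff = 60_000 if any(0 <= t - s < 0.02 for s in snap_times) else 1_000
        result = detector.update(diff, t)
        if result is not None:
            gestures.append(result)
    return gestures


print(run([0.1]))       # [1]
print(run([0.1, 0.4]))  # [2]
```

Without the separate release level, a single snap that stays above the threshold for several consecutive 200 Hz samples would be counted more than once.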

Command Pipeline: The gesture process writes snap counts to a pickle cache file using atomic file operations (write to a temp file, then os.replace). The tracking process polls for new commands: one snap triggers a circular panoramic recording flight, and two snaps capture a photo. This decoupled architecture lets the 200 Hz sensor loop and the 10 Hz control loop run independently.
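The write-then-poll handoff described above can be exercised end to end in a single script. This is a sketch under stated assumptions: both sides run in one process for brevity, and `publish`, `poll`, the `ACTIONS` table, and the cache file name are hypothetical.

```python
import os
import pickle
import tempfile


def publish(path: str, commands: list) -> None:
    """Write the full command history to a temp file, then atomically swap it in."""
    tmp = path + ".tmp"
    with open(tmp, "wb") as f:
        pickle.dump(commands, f)
    os.replace(tmp, path)  # readers see either the old file or the new one


def poll(path: str, cursor: int):
    """Return (new_commands, new_cursor); an absent file means nothing was published yet."""
    try:
        with open(path, "rb") as f:
            commands = pickle.load(f)
    except FileNotFoundError:
        return [], cursor
    return commands[cursor:], len(commands)


ACTIONS = {1: "panorama", 2: "photo"}  # snap count -> action, as described in the text

with tempfile.TemporaryDirectory() as workdir:
    path = os.path.join(workdir, "gesture_cache.pkl")  # hypothetical file name
    history = []  # gesture side: every snap count emitted so far
    handled = []  # control side: actions dispatched so far
    cursor = 0
    for snaps in (1, 2):
        history.append(snaps)
        publish(path, history)            # gesture process
        new, cursor = poll(path, cursor)  # control loop
        handled += [ACTIONS[n] for n in new]

print(handled)  # ['panorama', 'photo']
```

Because the reader only tracks a cursor into the published history, it can poll at its own rate and still see every command exactly once, no matter how many sensor samples arrived in between.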

Hardware

Software Stack

Challenges & Solutions

- Haar Cascade reliability — Face detection drops during rapid movement or poor lighting. Solved with a lost-face recovery system: after 15 frames without detection, the drone rotates toward the last known face direction

- PID controller lag — Long processing in the main control loop caused sluggish response during fast movements. Mitigated by optimizing the loop interval and keeping face detection lightweight

- No magnetometer on IMUs — Without absolute orientation, gesture recognition was redesigned to use snap detection via acceleration differentials rather than hand orientation tracking

- 200 Hz sensor vs 10 Hz control — The vast rate mismatch was solved with a parallel dual-process architecture: gesture recognition runs independently and communicates results via file IPC, decoupling sensor sampling from drone control

Future Work

- Gesture-based trajectory planning — Using IMU acceleration data to define custom flight paths through hand movements

- Obstacle avoidance — Integrating LiDAR or ultrasonic sensors for safe autonomous navigation in complex environments

- 3D depth estimation — Computing actual distance from bounding box geometry for more precise following behavior

- Enhanced face reacquisition — Adding z-axis scanning and velocity-based prediction for faster target relocation

References

- Viola, P. & Jones, M. (2001). “Rapid Object Detection using a Boosted Cascade of Simple Features.” Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR).

- EECS 206A. “Introduction to Robotics.” UC Berkeley, Fall 2024.